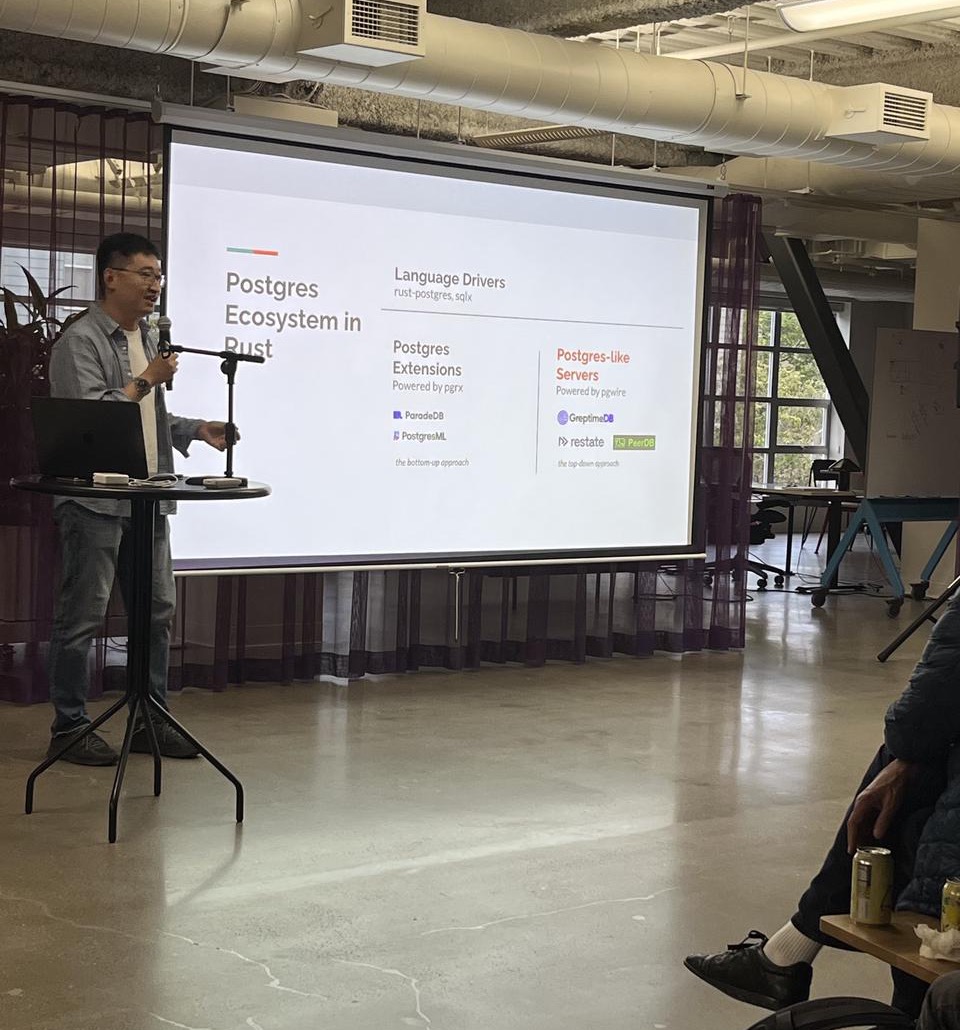

GreptimeDB has supported the Postgres protocol since its early versions. In 2025, with the acquisitions of Neon and CrunchyData, PostgreSQL has once again become a hot topic. Beyond the development of PostgreSQL itself, two paths within its ecosystem open it up to a larger world: what I call the "bottom-up" extension approach and the "top-down" protocol compatibility approach. Taking the Rust ecosystem as an example, the bottom-up approach primarily uses pgrx to bring Rust libraries into PostgreSQL as extensions, represented by projects like ParadeDB. The top-down approach emulates the Postgres protocol and interfaces to build various "Postgres-like" databases. GreptimeDB places itself in the "top-down" category.

What is the Postgres Protocol?

In a narrow sense, the Postgres protocol refers to the application-layer wire protocol that runs over TCP to communicate with a Postgres server. This is what actually happens over TCP when we connect to a database using psql or a JDBC driver.

In summary, this protocol mainly consists of five parts:

- Startup: Handshake information exchange and various authentication mechanisms during connection establishment.

- Simple Query: Text-based queries and responses.

- Extended Query: Commonly known as prepared statements. The backend caches the parsed query statement, and the client then transmits only the parameters.

- Copy: Used for importing and exporting data.

- Cancel: Cancels an executing query.

We are not including the database logical replication protocol and streaming replication protocol here, even though they use similar concepts and startup mechanisms.
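To make the wire format concrete, here is a minimal sketch of how a Simple Query message is framed in protocol 3.0: a one-byte tag (`Q`), a big-endian Int32 length that counts itself but not the tag, and the query text as a NUL-terminated string. The function names `encode_query` and `decode_frame` are illustrative and are not part of pgwire or any driver. Most other messages use the same tag-plus-length framing; the startup message is the notable exception, as it carries no tag byte.

```rust
/// Encode a frontend Simple Query message: tag 'Q', Int32 length, C string.
fn encode_query(sql: &str) -> Vec<u8> {
    // The length field counts itself (4 bytes), the query text, and the
    // trailing NUL terminator, but not the leading tag byte.
    let len = 4 + sql.len() + 1;
    let mut buf = Vec::with_capacity(1 + len);
    buf.push(b'Q');
    buf.extend_from_slice(&(len as u32).to_be_bytes());
    buf.extend_from_slice(sql.as_bytes());
    buf.push(0);
    buf
}

/// Decode one tagged message from the front of `buf`.
/// Returns (tag, body, bytes consumed), or None if the frame is incomplete.
fn decode_frame(buf: &[u8]) -> Option<(u8, &[u8], usize)> {
    if buf.len() < 5 {
        return None;
    }
    let len = u32::from_be_bytes([buf[1], buf[2], buf[3], buf[4]]) as usize;
    if len < 4 || buf.len() < 1 + len {
        return None;
    }
    Some((buf[0], &buf[5..1 + len], 1 + len))
}

fn main() {
    let msg = encode_query("SELECT 1");
    let (tag, body, used) = decode_frame(&msg).expect("complete frame");
    println!("tag={} body_len={} used={}", tag as char, body.len(), used);
}
```

Because every frame announces its own length, a server can read messages off the socket without understanding their contents, which is what lets a library handle the transport while the application decides what a "query" means.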

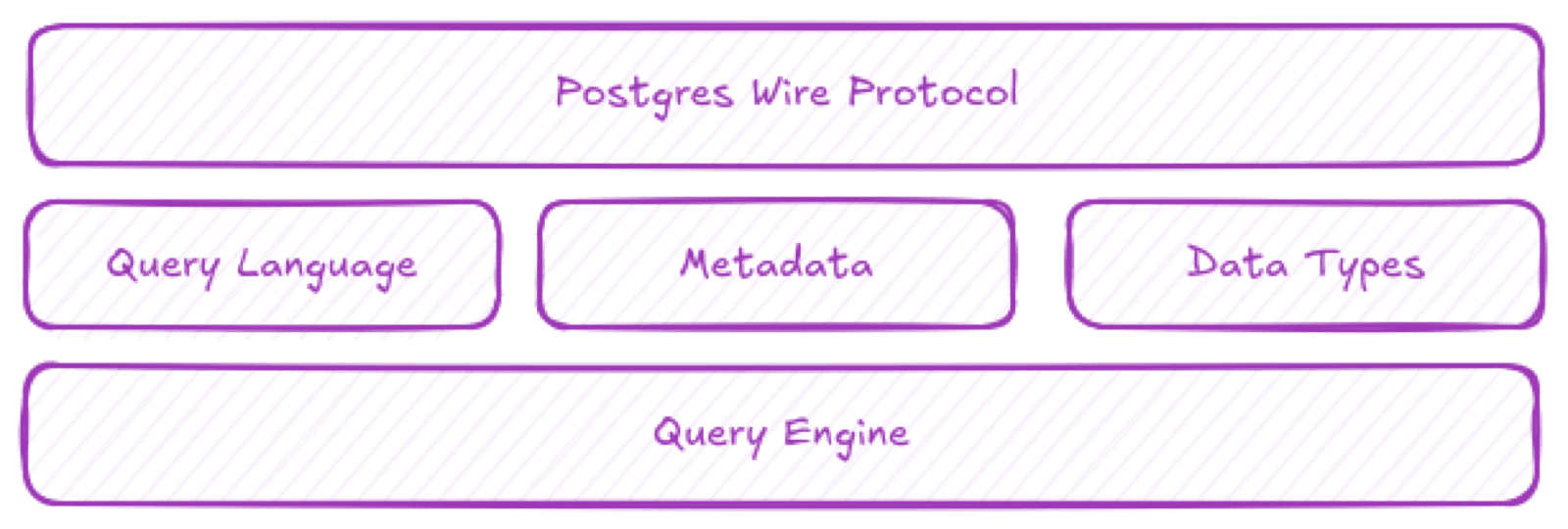

However, to achieve relatively complete Postgres compatibility, the narrow protocol is just the first layer. We also need to support the broader Postgres protocol, which includes:

- Query Language: Its SQL dialect and key functions.

- Data Type System: Establishing a mapping to Postgres's own type system.

- pg_catalog Metadata: Support for the metadata information system.
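As a rough illustration of the type-system layer, the sketch below maps a hypothetical set of engine-side column types to Postgres types. The `ColumnType` enum and `to_pg_type` function are invented for this example and are not GreptimeDB's actual mapping; the OIDs are the built-in values from Postgres's `pg_type` catalog, which clients use to decide how to decode each column.

```rust
/// Hypothetical engine-side column types (loosely modelled on Arrow's).
#[derive(Debug, Clone, Copy, PartialEq)]
enum ColumnType {
    Boolean,
    Int16,
    Int32,
    Int64,
    Float32,
    Float64,
    Utf8,
    Binary,
    TimestampMicros,
}

/// A Postgres type as a client sees it: its name and its OID in pg_type.
#[derive(Debug, PartialEq)]
struct PgType {
    name: &'static str,
    oid: u32,
}

/// Map an engine type to the Postgres type reported in RowDescription.
fn to_pg_type(t: ColumnType) -> PgType {
    let (name, oid) = match t {
        ColumnType::Boolean => ("bool", 16),
        ColumnType::Int16 => ("int2", 21),
        ColumnType::Int32 => ("int4", 23),
        ColumnType::Int64 => ("int8", 20),
        ColumnType::Float32 => ("float4", 700),
        ColumnType::Float64 => ("float8", 701),
        ColumnType::Utf8 => ("text", 25),
        ColumnType::Binary => ("bytea", 17),
        ColumnType::TimestampMicros => ("timestamp", 1114),
    };
    PgType { name, oid }
}

fn main() {
    for t in [ColumnType::Int64, ColumnType::Utf8, ColumnType::TimestampMicros] {
        let pg = to_pg_type(t);
        println!("{:?} -> {} (oid {})", t, pg.name, pg.oid);
    }
}
```

Types with no natural Postgres counterpart are where most of the design work lies; this sketch deliberately covers only the easy cases.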

Layers of the broader Postgres protocol

Benefits of Postgres Protocol Compatibility

Compared to designing a new application-layer protocol from scratch, using the Postgres protocol offers many benefits.

Proven Reliability: The current mainstream version 3.0 of the protocol has been in production use for over two decades and is thoroughly proven. It supports TLS, various authentication methods, and client negotiation. The protocol even fully supports streaming results back to the client, although Postgres itself does not take advantage of this because of the limitations of its process model.

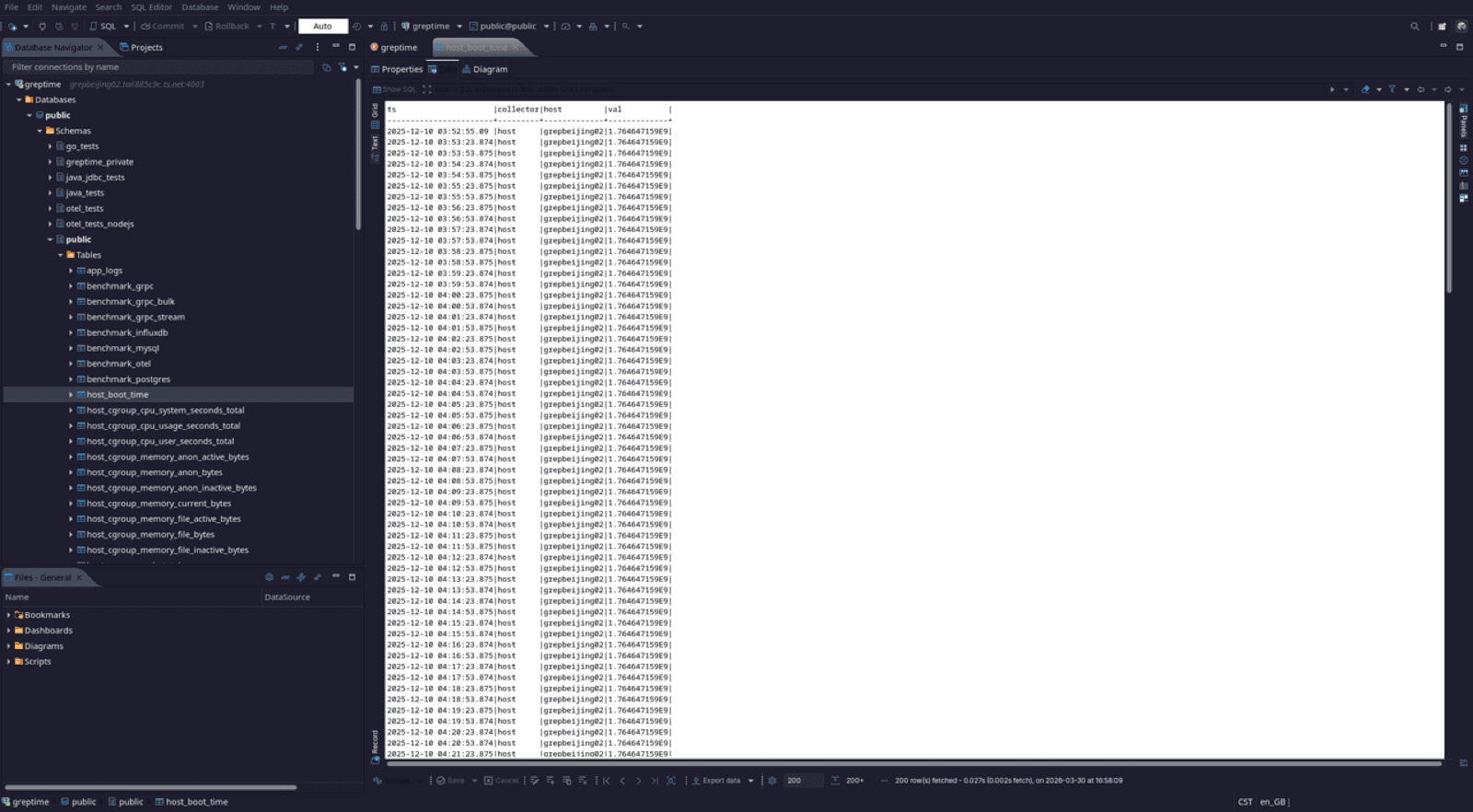

Unlocks a Wealth of Tools: It unlocks a wide range of programming language drivers, database management tools, and BI tools. Almost every mature programming language has a Postgres driver. By implementing narrow protocol support, you can build applications for GreptimeDB using these clients. Additionally, many database access and management tools, from the simple psql command line, to GUI tools like DBeaver, to various reporting tools, can directly manage and use a Postgres-protocol-compatible database as if it were Postgres. These tools often require more pg_catalog compatibility to allow clients to fetch metadata.

Enables Federated Queries as an FDW Data Source: By mounting GreptimeDB as an external data source for native Postgres using FDW (Foreign Data Wrapper), you can initiate SQL queries directly on native Postgres and even perform JOIN operations between data in both databases. This is ideal for scenarios like managing device metadata with native Postgres while handling time-series data with GreptimeDB.

Accessing GreptimeDB via DBeaver

Of course, the Postgres protocol also has some limitations:

Row-Oriented: The protocol is designed around row-oriented results. If the underlying storage or execution engine is column-oriented, the data has to be converted into rows before it can be returned.
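The conversion cost can be seen in a small sketch. Assuming a hypothetical `Column` type that holds nullable text values, each result row has to be assembled by reading position `i` from every column and writing a DataRow (`D`) message: an Int16 column count, then for each value an Int32 byte length (or -1 for NULL) followed by the bytes. None of these helper names come from pgwire.

```rust
/// A hypothetical column of nullable text values, as a columnar engine might hold it.
type Column = Vec<Option<String>>;

/// Encode row `i` across all columns as a backend DataRow ('D') message.
fn encode_data_row(columns: &[Column], i: usize) -> Vec<u8> {
    let mut body = Vec::new();
    // Int16: number of column values in this row.
    body.extend_from_slice(&(columns.len() as i16).to_be_bytes());
    for col in columns {
        match &col[i] {
            Some(v) => {
                // Int32 length followed by the value in text format.
                body.extend_from_slice(&(v.len() as i32).to_be_bytes());
                body.extend_from_slice(v.as_bytes());
            }
            // A length of -1 marks NULL; no value bytes follow.
            None => body.extend_from_slice(&(-1i32).to_be_bytes()),
        }
    }
    let mut msg = vec![b'D'];
    // The message length counts itself plus the body, not the tag byte.
    msg.extend_from_slice(&((body.len() + 4) as i32).to_be_bytes());
    msg.extend_from_slice(&body);
    msg
}

/// Transpose columns into one DataRow message per row.
/// Assumes every column has the same number of values.
fn rows_from_columns(columns: &[Column]) -> Vec<Vec<u8>> {
    let n = columns.first().map_or(0, |c| c.len());
    (0..n).map(|i| encode_data_row(columns, i)).collect()
}

fn main() {
    let host = vec![Some("a".to_string()), Some("b".to_string())];
    let cpu = vec![Some("0.5".to_string()), None];
    let rows = rows_from_columns(&[host, cpu]);
    println!("{} rows, first is {} bytes", rows.len(), rows[0].len());
}
```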

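Cancel Query Mechanism: The query cancellation mechanism is tied to Postgres's process model. A client cancels a query by opening a second connection and presenting the backend process ID and secret key it received at startup, which is cumbersome to implement and carries security risks.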
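For reference, the CancelRequest message in protocol 3.0 is a fixed 16-byte packet with no tag byte: an Int32 length of 16, the magic code 80877102, the backend process ID, and the secret key. The sketch below encodes and parses it; the function names are illustrative only. A server that does not run one process per connection has to invent its own meaning for the process ID and keep a lookup table from that pair to the running query.

```rust
/// Magic request code for CancelRequest: 1234 in the high 16 bits, 5678 in the low.
const CANCEL_REQUEST_CODE: u32 = (1234 << 16) | 5678; // 80877102

/// Encode a protocol 3.0 CancelRequest for the given process ID and secret key.
fn encode_cancel_request(process_id: i32, secret_key: i32) -> [u8; 16] {
    let mut buf = [0u8; 16];
    buf[0..4].copy_from_slice(&16u32.to_be_bytes());
    buf[4..8].copy_from_slice(&CANCEL_REQUEST_CODE.to_be_bytes());
    buf[8..12].copy_from_slice(&process_id.to_be_bytes());
    buf[12..16].copy_from_slice(&secret_key.to_be_bytes());
    buf
}

/// Parse a CancelRequest, returning (process_id, secret_key) if it is well formed.
fn parse_cancel_request(buf: &[u8]) -> Option<(i32, i32)> {
    if buf.len() != 16 {
        return None;
    }
    let word = |i: usize| [buf[i], buf[i + 1], buf[i + 2], buf[i + 3]];
    if u32::from_be_bytes(word(0)) != 16 || u32::from_be_bytes(word(4)) != CANCEL_REQUEST_CODE {
        return None;
    }
    Some((i32::from_be_bytes(word(8)), i32::from_be_bytes(word(12))))
}

fn main() {
    let packet = encode_cancel_request(42, 7);
    println!("{:?}", parse_cancel_request(&packet));
}
```

Note that the packet carries no authentication beyond the key itself, so anyone who learns the pair can cancel that session's queries.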

Type Inference Requirement for Extended Query: Extended query requires the database to perform type inference for variable parameters in a statement without actually executing the query. SQLite and DuckDB lack sufficient APIs to implement this capability, thus they cannot fully support extended query.
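The requirement can be illustrated with a toy example. When a client describes a prepared statement, the server must report a type for each `$n` placeholder before any parameter values arrive. The sketch below assumes a hypothetical, already-parsed statement reduced to a list of `column <op> $n` comparisons and infers each placeholder's type from the column it is compared with. A real planner handles far more cases (expressions, casts, function arguments); `SqlType`, `Predicate`, and `infer_param_types` are invented names.

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, Copy, PartialEq)]
enum SqlType {
    Int8,
    Float8,
    Text,
}

/// A comparison `column <op> $n` found in a parsed statement (simplified, hypothetical).
struct Predicate {
    column: &'static str,
    placeholder: usize,
}

/// Infer one type per placeholder from the columns they are compared with.
/// A slot stays None when nothing in the statement constrains it.
fn infer_param_types(
    schema: &HashMap<&str, SqlType>,
    predicates: &[Predicate],
    n_params: usize,
) -> Vec<Option<SqlType>> {
    let mut types = vec![None; n_params];
    for p in predicates {
        // Placeholders are 1-based in SQL ($1, $2, ...).
        let Some(idx) = p.placeholder.checked_sub(1) else { continue };
        if let (Some(slot), Some(ty)) = (types.get_mut(idx), schema.get(p.column)) {
            *slot = Some(*ty);
        }
    }
    types
}

fn main() {
    // Stands in for: SELECT * FROM metrics WHERE host = $1 AND cpu > $2 AND ts > $3
    let schema = HashMap::from([
        ("host", SqlType::Text),
        ("cpu", SqlType::Float8),
        ("ts", SqlType::Int8),
    ]);
    let predicates = [
        Predicate { column: "host", placeholder: 1 },
        Predicate { column: "cpu", placeholder: 2 },
        Predicate { column: "ts", placeholder: 3 },
    ];
    println!("{:?}", infer_param_types(&schema, &predicates, 3));
}
```

The point is that inference runs against the schema and the parsed statement alone, which is the capability an embedded engine must expose for extended query to work fully.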

How to Achieve Postgres Protocol Compatibility

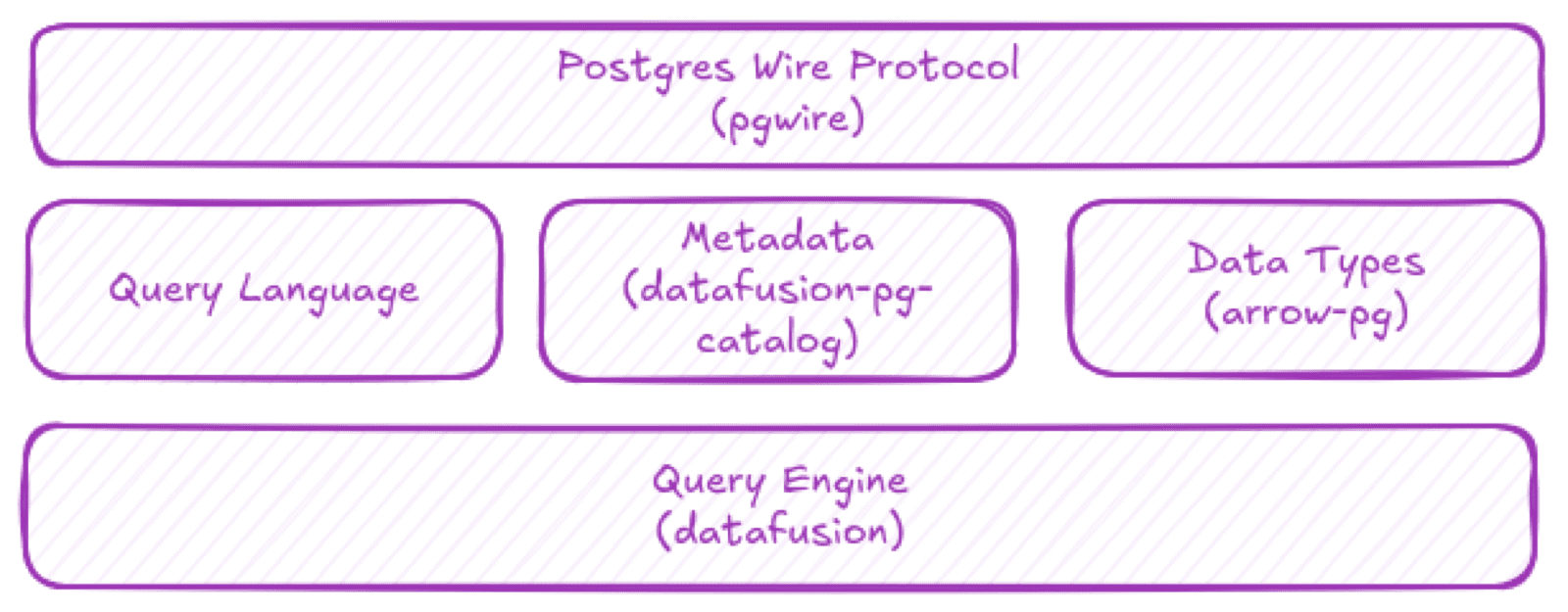

GreptimeDB uses pgwire, a library I authored, and the DataFusion ecosystem to achieve Postgres protocol compatibility.

GreptimeDB's Postgres compatibility stack

Transport Protocol Support

If we compare the Postgres protocol to HTTP (both are application-layer protocols), pgwire plays the role of hyper, or even the axum framework, in the Rust ecosystem: it helps users build servers that speak the Postgres protocol. Users can selectively implement authentication, simple query, extended query, copy, and so on, for varying degrees of Postgres compatibility.

Taking simple query as an example, this subprotocol doesn't even assume the user's input is SQL. pgwire provides two levels of APIs:

- Low-level API: SimpleQueryHandler::on_query focuses on processing and semantics at the message level.

- High-level API: SimpleQueryHandler::do_query focuses on processing and semantics at the data level.

For example, the handler below uses the high-level API to echo the client's input back as a single-row result, whether or not that input is valid SQL:

```rust
#[async_trait]
impl SimpleQueryHandler for EchoHandler {
    async fn do_query<C>(&self, _client: &mut C, query: &str) -> PgWireResult<Vec<Response>>
    where
        C: ClientInfo + Sink<PgWireBackendMessage> + Unpin + Send + Sync,
        C::Error: Debug,
        PgWireError: From<<C as Sink<PgWireBackendMessage>>::Error>,
    {
        let query = query.to_string();

        // Describe the result set: a single text-format column named "input".
        let f1 = FieldInfo::new("input".into(), None, None, Type::VARCHAR, FieldFormat::Text);
        let schema = Arc::new(vec![f1]);

        // One row whose only value is the raw query text.
        let data = vec![Some(query)];
        let mut encoder = DataRowEncoder::new(schema.clone());
        let data_row_stream = stream::iter(data).map(move |r| {
            encoder.encode_field(&r)?;
            Ok(encoder.take_row())
        });

        Ok(vec![Response::Query(QueryResponse::new(
            schema,
            data_row_stream,
        ))])
    }
}
```

Connecting via psql:

```
❯ psql -h 127.0.0.1 -p 5432 -U postgres
psql (18.1, server 16.6-pgwire-0.38.2)
Type "help" for help.

postgres=# hello world;
    input
--------------
 hello world;
(1 row)
```

pg_catalog Support

pgwire provides support at the network protocol level. As mentioned earlier, further compatibility requires metadata support, which is where pg_catalog comes in. Since pg_catalog consists of a series of system tables and views, it needs to be built on top of some query engine. As GreptimeDB uses the open-source DataFusion query engine, we maintain the datafusion-postgres project. It acts as an adapter between pgwire, the DataFusion query engine, and the Arrow data format. It includes support for pg_catalog and arrow-pg, which handles conversion from Arrow data to Postgres data. However, since pg_catalog is functionally complex, with many tables deeply tied to the native Postgres mechanism, we focus primarily on a few key tables:

- pg_database: Catalog information

- pg_namespace: Schema information

- pg_tables: Table information

- pg_class: Table information

- pg_attribute: Column information
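To show why these tables need a query engine behind them, here is a toy sketch of what a client's "list tables" request amounts to: a join between pg_class and pg_namespace on the namespace OID, filtered to ordinary tables (`relkind = 'r'`). The structs contain only the columns used here, and `list_tables` is an illustrative name, not part of datafusion-postgres. In practice the same result is produced by running the client's SQL against catalog tables registered in the engine.

```rust
/// Simplified row of pg_namespace (only the columns used here).
struct PgNamespace {
    oid: u32,
    nspname: &'static str,
}

/// Simplified row of pg_class (only the columns used here).
struct PgClass {
    relname: &'static str,
    relnamespace: u32, // references pg_namespace.oid
    relkind: char,     // 'r' = ordinary table, 'v' = view, 'i' = index
}

/// Roughly what this query computes:
///   SELECT n.nspname, c.relname
///   FROM pg_class c JOIN pg_namespace n ON n.oid = c.relnamespace
///   WHERE c.relkind = 'r' ORDER BY 1, 2
fn list_tables(namespaces: &[PgNamespace], classes: &[PgClass]) -> Vec<(String, String)> {
    let mut out = Vec::new();
    for c in classes.iter().filter(|c| c.relkind == 'r') {
        if let Some(n) = namespaces.iter().find(|n| n.oid == c.relnamespace) {
            out.push((n.nspname.to_string(), c.relname.to_string()));
        }
    }
    out.sort();
    out
}

fn main() {
    let namespaces = [
        PgNamespace { oid: 11, nspname: "pg_catalog" },
        PgNamespace { oid: 2200, nspname: "public" },
    ];
    let classes = [
        PgClass { relname: "metrics", relnamespace: 2200, relkind: 'r' },
        PgClass { relname: "metrics_view", relnamespace: 2200, relkind: 'v' },
        PgClass { relname: "pg_class", relnamespace: 11, relkind: 'r' },
    ];
    for (schema, table) in list_tables(&namespaces, &classes) {
        println!("{}.{}", schema, table);
    }
}
```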

Some database management tools, like DataGrip, send very complex pg_catalog queries during startup, which may involve queries or UDFs not supported by DataFusion. This adaptation work requires dedicated effort. We welcome contributions to the development of datafusion-postgres!

Presenting datafusion-postgres at the DataFusion San Francisco meetup

Conclusion

We have implemented Postgres compatibility as reusable libraries. This means that not only GreptimeDB, but any other modern data infrastructure can quickly adapt to this protocol and build a "Postgres-like" ecosystem. Isn't that a new kind of "Postgres" in itself?

Besides GreptimeDB, pgwire is also used in PeerDB (acquired by ClickHouse), SpacetimeDB designed for real-time online games, the open-source project corrosion by fly.io, and the recently released db9.ai, among others. On DataFusion, we can also integrate geoarrow and geodatafusion to build a PostGIS-compatible ecosystem.

If you're also interested in building this new Postgres ecosystem, feel free to join the development of related projects or use these tools directly to realize your own ideas.