Case Study

How Li Auto deployed an on-vehicle time-series database to collect raw data continuously, slash upload costs, and unlock proactive vehicle diagnostics.

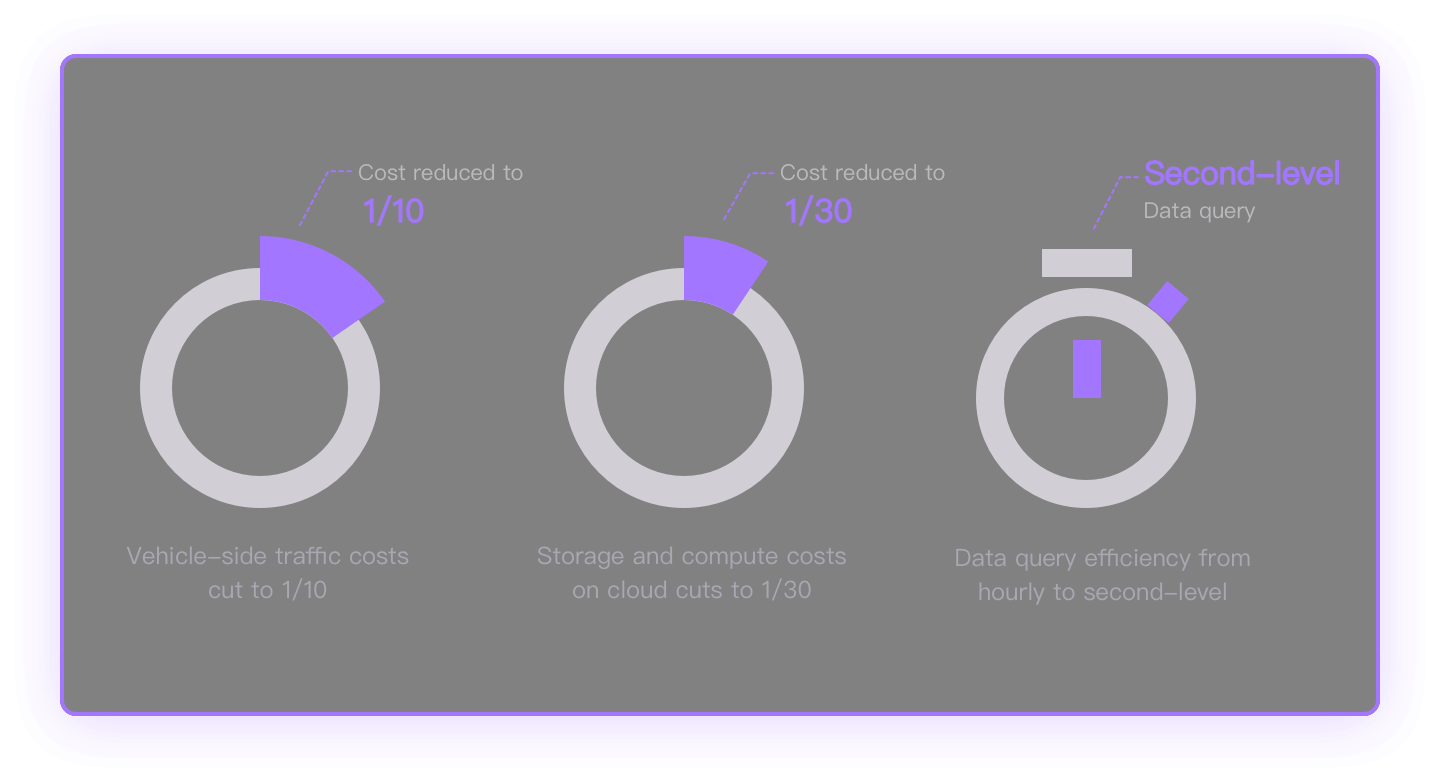

Reduces network traffic by up to 97% through intelligent edge-cloud sync

Cuts cloud storage costs by up to 98% with object storage optimization

Enables real-time edge computing for enhanced performance and data privacy

Provides comprehensive data management with built-in governance and monitoring

GreptimeDB Edge is optimized for resource-constrained edge environments, enabling local data processing and analytics with high compression. Direct synchronization to cloud object storage reduces bandwidth costs while maintaining query capabilities across edge and cloud.

Builds on GreptimeDB Enterprise's cloud-native architecture with compute-storage disaggregation, tuned for edge-cloud scenarios. Combines SQL analytics with broad ecosystem compatibility (MySQL, monitoring protocols, visualization tools). Object storage provides elastic scalability and cost efficiency, and the edge-cloud architecture supports high-performance data ingestion and processing at scale.

A unified control plane that streamlines edge-cloud data operations with integrated model management, quality monitoring, and task orchestration capabilities.

Real questions from automotive engineering teams.

Validated vehicle-side benchmarks (test hardware: Qualcomm 8295 automotive-grade SoC):

In Li Auto's production deployment, memory is configured at roughly 100 MB (case study). Resources can be capped through database configuration and OS-level limits, and a monitoring API exposes CPU and memory usage in real time. Heavy analytical queries are time-sliced and automatically aborted when they would push CPU usage too high, so navigation and cockpit apps stay responsive.
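The time-slicing idea can be pictured with a small Python sketch. This is not GreptimeDB's scheduler: `run_sliced`, `QueryAborted`, the `cpu_percent` probe, and the 80% threshold are all hypothetical names and values chosen for illustration. The query is processed one slice at a time, and a load check between slices decides whether to continue.

```python
from typing import Callable, Iterable, List


class QueryAborted(RuntimeError):
    """Raised when the (hypothetical) CPU budget is exceeded."""


def run_sliced(
    chunks: Iterable[List[float]],
    cpu_percent: Callable[[], float],
    cpu_limit: float = 80.0,  # assumed threshold, not a documented default
) -> float:
    """Sum values one slice at a time, checking CPU load before each slice."""
    total = 0.0
    for i, chunk in enumerate(chunks):
        load = cpu_percent()
        if load > cpu_limit:
            # Stop early so foreground apps keep their CPU headroom.
            raise QueryAborted(f"aborted before slice {i}: CPU at {load:.0f}%")
        total += sum(chunk)
    return total


# Usage with a fake probe: the third reading exceeds the limit.
fake_readings = iter([20.0, 35.0, 95.0])
try:
    run_sliced([[1.0], [2.0], [3.0]], lambda: next(fake_readings))
except QueryAborted as exc:
    print(exc)
```

Injecting the probe as a callable keeps the sketch testable; a real deployment would read load from the operating system or from the monitoring API mentioned above.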

CAN bus data compresses 30–40× versus traditional ASC files. Millisecond signal data shrinks an additional 30–50% on top of the customer's existing compression. On-vehicle logs compress to roughly 1/30 of the raw log size, about 50% smaller than the output of traditional compression algorithms. In Li Auto's deployment, cloud storage cost dropped to about 20% of the prior solution and upload bandwidth was cut by over 50%, saving tens of millions in cumulative cloud costs (case study). Compression strategy is set per table, so high-frequency telemetry and cold logs can use different codecs.
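To put the ratios in concrete terms, the short calculation below converts a compression ratio into a stored size and a percentage reduction. The 10 GB input is a made-up figure for illustration, and the helper names are not part of any GreptimeDB API.

```python
def compressed_size(raw_size: float, ratio: float) -> float:
    """Size after compression at the given ratio (30 means 30 times smaller)."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return raw_size / ratio


def reduction_percent(ratio: float) -> float:
    """Share of the raw size removed by an N-times compression ratio."""
    return (1 - 1 / ratio) * 100


raw_can_gb = 10.0  # hypothetical: 10 GB of raw ASC-format CAN capture
for ratio in (30, 40):
    size = compressed_size(raw_can_gb, ratio)
    print(f"{ratio}x -> {size:.2f} GB ({reduction_percent(ratio):.1f}% smaller)")
# 30x -> 0.33 GB (96.7% smaller)
# 40x -> 0.25 GB (97.5% smaller)

# Logs at 1/30 of raw size, described as about 50% smaller than traditional
# compression, imply the traditional output is roughly 1/15 of the raw size.
traditional_fraction = (1 / 30) / (1 - 0.5)
print(f"traditional output is about 1/{1 / traditional_fraction:.0f} of raw")
```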

Three layers of reliability:

Each row carries two timestamps: the wall-clock time supplied by the writer (which can jump when the RTC or GPS corrects it) and a monotonic uptime timestamp that only increases. The cloud joins them in SQL to reconstruct a consistent timeline. At ingestion, timestamps from different ECUs are normalized against a chosen reference (typically GPS or NTP), so cross-domain traces align on the same axis.
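The sketch below illustrates the two-timestamp idea in Python rather than SQL. It is an assumption-laden example, not the product's actual reconstruction logic: the `Row` type and `reconstruct_timeline` are hypothetical, all rows are assumed to come from a single boot session, and the last row is assumed to have been written after the clock was corrected.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Row:
    mono_ms: int   # monotonic uptime since boot, never decreases
    wall_ms: int   # wall-clock epoch time as reported by the writer
    value: float


def reconstruct_timeline(rows: List[Row], ref_index: int = -1) -> List[Tuple[int, float]]:
    """Re-derive wall-clock times from the monotonic clock.

    The wall/monotonic offset of one trusted row (by default the latest)
    is applied to every row, which removes earlier wall-clock jumps.
    """
    if not rows:
        return []
    ordered = sorted(rows, key=lambda r: r.mono_ms)
    ref = ordered[ref_index]
    offset = ref.wall_ms - ref.mono_ms
    return [(r.mono_ms + offset, r.value) for r in ordered]


rows = [
    Row(mono_ms=1_000, wall_ms=1_700_000_001_000, value=12.1),
    Row(mono_ms=2_000, wall_ms=1_700_000_002_000, value=12.3),
    # A GPS fix arrives here and the wall clock jumps forward by 5 s.
    Row(mono_ms=3_000, wall_ms=1_700_000_008_000, value=12.2),
]
timeline = reconstruct_timeline(rows)
for ts, value in timeline:
    print(ts, value)
# 1700000006000 12.1
# 1700000007000 12.3
# 1700000008000 12.2
```

After reconstruction the samples are evenly spaced at one second, matching the monotonic clock, even though the raw wall-clock values contain a five-second jump.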

Yes. The vehicle-side database ships a full SQL engine with low-overhead point queries and supports user-defined functions for custom business logic. Common patterns:

See the Integrations Overview for the full list.

Edge devices (including vehicles) are licensed by device count. The cloud side is licensed per node.

End-to-end savings come from three places: 30–40× compression cuts storage and bandwidth; vehicle-side aggregation removes the need for separate stream-processing engines like Flink in the cloud; and the vehicle-cloud isomorphic architecture skips the secondary write into cloud storage. Li Auto's deployment (case study) dropped cloud storage cost to about 20% of the prior solution and cut upload bandwidth by over 50%.

For a tailored quote, contact us.

Vehicle-side migration cost is generally low: GreptimeDB supports a wide range of open protocols and offers edge-optimized ingestion paths for high-throughput telemetry. The cloud side uses standard SQL, so existing analytics and BI tools connect directly. Typical PoC timelines run 1 to 3 months; the exact effort depends on the customer's existing solution.

Specialized edge-cloud integration with automotive-grade reliability

Unified API across edge and cloud environments

97% network reduction and 98% storage cost savings

Local edge processing with intelligent sync
