TimescaleDB Alternative: Why GreptimeDB for Time-Series and Observability

Introduction

TimescaleDB has been a standard answer for time-series on PostgreSQL since 2017. Hypertables, continuous aggregates, and full SQL compatibility keep it useful for mixed relational and time-series workloads. The trade-offs show up at scale: multi-node distributed hypertables were sunset in v2.13, and the current scaling model is single-node with the Hypercore columnar engine (community edition since v2.18).

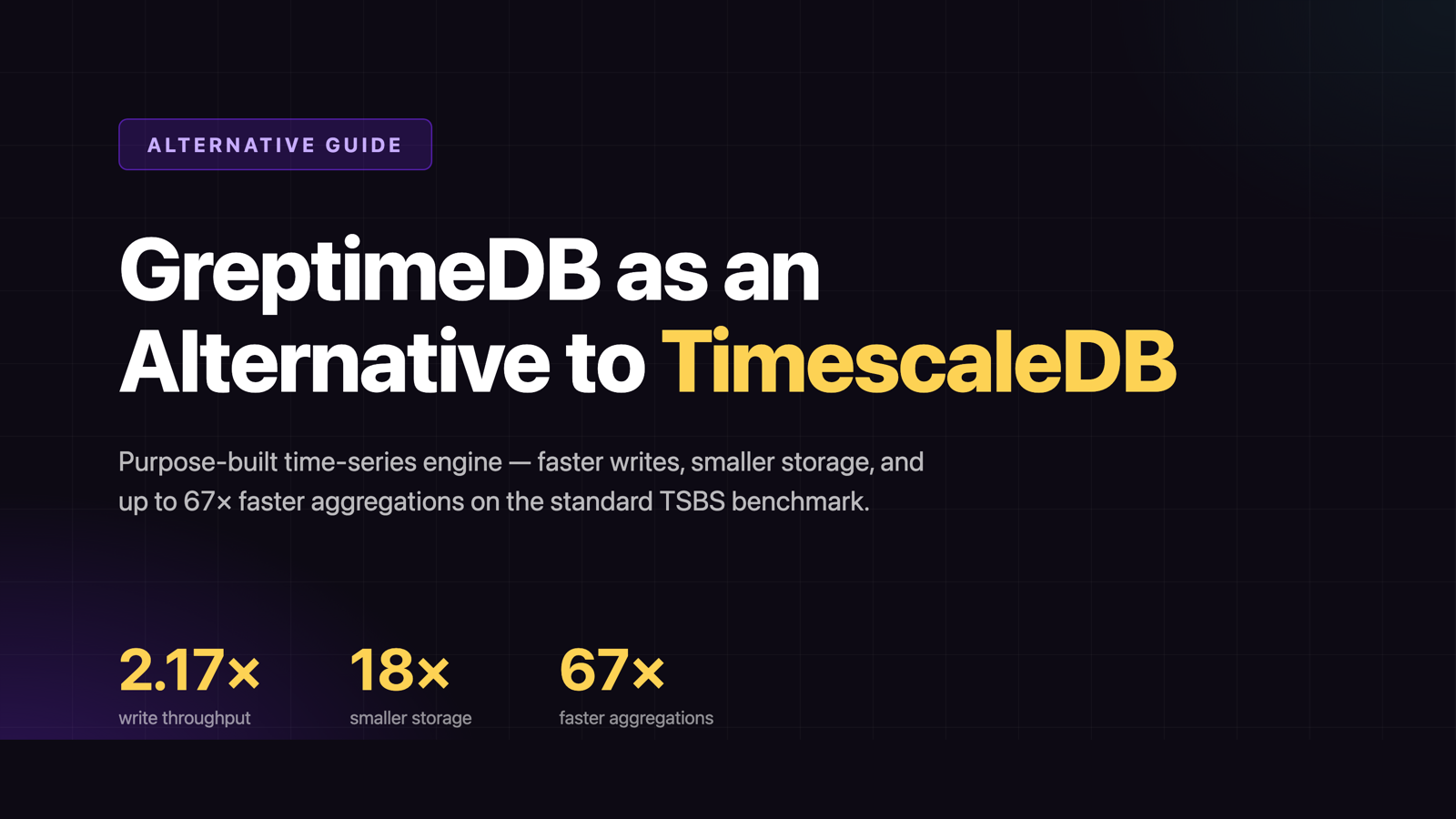

GreptimeDB takes a different starting point — purpose-built for time-series and observability, written in Rust, with object storage as the primary storage layer and a stateless compute tier on top. In a TSBS benchmark on standard hardware (AWS c5d.2xlarge, cpu-only scenario, 4,000 hosts over 3 days), GreptimeDB ingested at 2.17× TimescaleDB's throughput, used 1/18 of the storage, and ran 13 of 15 query types faster — up to 67× on large-range aggregations.

This article walks through where GreptimeDB is a direct TimescaleDB alternative for time-series and observability workloads, and where TimescaleDB still has the edge.

PostgreSQL Extension vs. Purpose-Built TSDB

TimescaleDB is a PostgreSQL extension. Hypertables partition data into chunks by time and (optionally) space, and chunks are stored as regular PostgreSQL tables. Compression is opt-in at the chunk level, layered on top of PostgreSQL's row-based heap, with the Hypercore columnar engine available in the community edition since v2.18. Multi-node distributed hypertables were sunset in v2.13, so the current scaling story is a single PostgreSQL instance with Hypercore on top.

GreptimeDB is built around an LSM-tree storage engine, Mito, with columnar Apache Parquet SST files written directly to object storage. Tables are partitioned into Regions; a Region's data lives in S3, GCS, or Azure Blob, and migration is a metadata operation rather than a bulk copy. Frontend ingestion nodes are stateless — adding write capacity is a matter of adding pods.
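To make "migration is a metadata operation" concrete, here is a deliberately tiny toy model, not GreptimeDB's actual implementation. The `RegionRouter` class, its method names, and the `s3://` prefix are all hypothetical. The sketch only illustrates that when a Region's files live in object storage, moving the Region between nodes means updating a routing entry while the stored files stay where they are.

```python
from dataclasses import dataclass, field


@dataclass
class RegionRouter:
    """Toy routing table (hypothetical): which node serves which region."""

    # region id -> node currently serving reads and writes for that region
    routes: dict[int, str] = field(default_factory=dict)
    # region id -> object-store prefix holding the region's Parquet files
    object_prefix: dict[int, str] = field(default_factory=dict)

    def open_region(self, region_id: int, node: str, prefix: str) -> None:
        """Register a region on a node, pointing at its object-store prefix."""
        self.routes[region_id] = node
        self.object_prefix[region_id] = prefix

    def migrate(self, region_id: int, target_node: str) -> None:
        """Reassign the region; the object-store prefix is left untouched."""
        self.routes[region_id] = target_node


router = RegionRouter()
router.open_region(1, "datanode-0", "s3://example-bucket/region-1/")
router.migrate(1, "datanode-1")
print(router.routes[1], router.object_prefix[1])
```

A real system also has to flush in-memory state, hand over leadership safely, and warm caches on the target node. The toy model leaves all of that out on purpose.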

The practical difference shows up once a dataset outgrows what a single PostgreSQL instance handles comfortably. GreptimeDB scales horizontally without a single-node ceiling, and storage cost is decoupled from compute cost.

TSBS Benchmark Snapshot

The benchmark used TSBS, the time-series benchmark suite originally built by the TimescaleDB team. Both databases ran on AWS c5d.2xlarge (8 vCPU, 16 GB RAM, 300 GB gp3 SSD), with GreptimeDB v1.0.0-beta.2 and TimescaleDB 2 on PostgreSQL 17. Data was generated with the same seed for both engines, so each loaded identical rows.

| Metric | TimescaleDB | GreptimeDB | Delta |
|---|---|---|---|
| Write throughput | 131,531 rows/s | 285,301 rows/s | 2.17× |
| Storage on disk | 20 GB | 1.1 GB | 18× |
| cpu-max-all-8 (8h max, all hosts) | 6,012 ms | 89 ms | 67× |
| single-groupby-1-1-12 (12h, 1 host, 1 metric) | 571 ms | 9 ms | 62× |
| lastpoint (latest per host) | 131 ms | 1,131 ms | TimescaleDB 8.7× |
| groupby-orderby-limit (Top N) | 122 ms | 728 ms | TimescaleDB 6× |

The pattern is consistent. Large-range aggregations and multi-host scans favor GreptimeDB, where columnar Parquet, vectorized execution on Apache DataFusion, and time-window organization mean fewer bytes scanned per query. Point lookups and Top-N favor TimescaleDB, where PostgreSQL's B-tree indexes are already well-tuned for the access pattern. Full numbers and reproduction steps are in the benchmark blog.
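As a quick sanity check, the Delta column can be recomputed from the table values. The sketch below hard-codes the numbers shown in the table; the `advantage` helper and its `direction` argument are names made up for this example. Because the table shows rounded latencies, a recomputed factor can differ slightly from the published one, which was presumably derived from unrounded measurements.

```python
# (TimescaleDB, GreptimeDB, direction) copied from the table above.
# "higher" means a larger number is better (throughput); "lower" means a
# smaller number is better (storage size, query latency).
RESULTS = {
    "write throughput (rows/s)": (131_531, 285_301, "higher"),
    "storage on disk (GB)": (20, 1.1, "lower"),
    "cpu-max-all-8 (ms)": (6_012, 89, "lower"),
    "single-groupby-1-1-12 (ms)": (571, 9, "lower"),
    "lastpoint (ms)": (131, 1_131, "lower"),
    "groupby-orderby-limit (ms)": (122, 728, "lower"),
}


def advantage(timescale, greptime, direction):
    """Return (winner, factor) with factor >= 1 for the better engine."""
    if direction == "higher":
        ratio = greptime / timescale
    else:
        ratio = timescale / greptime
    if ratio >= 1:
        return "GreptimeDB", ratio
    return "TimescaleDB", 1 / ratio


for metric, (ts_value, gt_value, direction) in RESULTS.items():
    winner, factor = advantage(ts_value, gt_value, direction)
    print(f"{metric:32s} {winner:12s} {factor:5.1f}x")
```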

Object Storage Economics

TimescaleDB's primary storage is local block storage, typically EBS in cloud deployments, with a tiered-storage option that moves cold chunks to S3. Hot data sits on the same disk as the PostgreSQL data files, which keeps query latency predictable but ties storage cost to provisioned SSD pricing.

GreptimeDB writes immutable Parquet files to object storage as its primary storage layer. A multi-tier cache keeps recent data on NVMe for low-latency reads. At current AWS list prices, S3 Standard (~$0.023/GB/mo) costs roughly 3–5× less per GB than provisioned SSD EBS (gp3 at ~$0.08/GB/mo). Combined with the 18× smaller compressed footprint on the same TSBS workload, the effective storage bill for retained time-series data drops by at least an order of magnitude. The GreptimeDB OSS product page summarizes this as up to 50× total cost reduction for observability workloads.

The gap compounds for long-retention workloads (a year of fleet telemetry, multi-year compliance archives).
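The storage arithmetic can be written out as a back-of-the-envelope sketch. It uses only the approximate list prices and the TSBS footprints quoted above. It ignores request charges, the NVMe cache tier, replication, and EBS over-provisioning, so treat the result as an illustration of how the two factors multiply, not as a bill estimate. The constant and function names are chosen for this example.

```python
# Approximate AWS list prices quoted in the text (USD per GB per month).
S3_STANDARD_USD_PER_GB_MONTH = 0.023
EBS_GP3_USD_PER_GB_MONTH = 0.08

# On-disk footprint of the same TSBS dataset, from the benchmark table (GB).
TIMESCALEDB_SIZE_GB = 20
GREPTIMEDB_SIZE_GB = 1.1


def monthly_storage_cost(size_gb, usd_per_gb_month):
    """Raw capacity cost only: no request, cache, or replication charges."""
    return size_gb * usd_per_gb_month


price_ratio = EBS_GP3_USD_PER_GB_MONTH / S3_STANDARD_USD_PER_GB_MONTH
size_ratio = TIMESCALEDB_SIZE_GB / GREPTIMEDB_SIZE_GB

timescale_cost = monthly_storage_cost(TIMESCALEDB_SIZE_GB, EBS_GP3_USD_PER_GB_MONTH)
greptime_cost = monthly_storage_cost(GREPTIMEDB_SIZE_GB, S3_STANDARD_USD_PER_GB_MONTH)
cost_ratio = timescale_cost / greptime_cost  # equals price_ratio * size_ratio

print(f"price ratio: {price_ratio:.1f}x, size ratio: {size_ratio:.1f}x")
print(f"capacity cost ratio: {cost_ratio:.0f}x")
```

The two ratios multiply, which is why a modest per-GB price gap turns into a large difference once compression is factored in.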

SQL + PromQL Dual Query

TimescaleDB exposes everything through PostgreSQL SQL. That is a strength when the surrounding stack is PostgreSQL-shaped: psql, JDBC drivers, ORMs, BI tools, and Foreign Data Wrappers work unchanged.

GreptimeDB exposes both SQL and PromQL as first-class query interfaces, plus PostgreSQL and MySQL wire-protocol compatibility for existing tooling. For ingestion, it accepts Prometheus Remote Write, InfluxDB Line Protocol, OpenTelemetry OTLP, and Loki Push API.

Practically: a Grafana dashboard that drives PromQL against Prometheus can point at GreptimeDB without rewriting expressions, while ad-hoc analytical queries use SQL against the same tables. Existing Prometheus, InfluxDB, or OpenTelemetry collectors write through unchanged, and migrating a TimescaleDB SQL workflow keeps the SQL surface familiar.
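As a rough sketch of what "pointing at GreptimeDB" means at the HTTP level, the snippet below builds the same PromQL range query against two base URLs. The `/api/v1/query_range` path and its `query`, `start`, `end`, and `step` parameters are the standard Prometheus HTTP API. The GreptimeDB base URL (port 4000 with a `/v1/prometheus` prefix), the host names, and the metric name are assumptions for illustration and should be checked against your deployment and the GreptimeDB documentation.

```python
from urllib.parse import urlencode


def promql_range_url(base_url, promql, start, end, step):
    """Build a Prometheus-style range-query URL (no request is sent)."""
    params = urlencode({"query": promql, "start": start, "end": end, "step": step})
    return f"{base_url.rstrip('/')}/api/v1/query_range?{params}"


# The PromQL expression stays the same; only the base URL changes.
EXPRESSION = "avg by (host) (rate(cpu_usage_total[5m]))"  # hypothetical metric

prom_url = promql_range_url(
    "http://prometheus:9090", EXPRESSION, 1700000000, 1700003600, "60s"
)
greptime_url = promql_range_url(
    "http://greptimedb:4000/v1/prometheus",  # assumed GreptimeDB endpoint
    EXPRESSION, 1700000000, 1700003600, "60s",
)

print(prom_url)
print(greptime_url)
```

In Grafana, the equivalent change is editing the URL of the Prometheus data source; dashboards and their expressions are left as they are.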

Migration Path

Most TimescaleDB schemas map directly to GreptimeDB:

- Hypertable → time-partitioned table. Tables declare a `TIME INDEX` and partition on column values. Time-based retention is set with TTL options.
- Continuous aggregates → Flow. GreptimeDB's Flow engine runs incremental, streaming aggregations into materialized output tables, the same shape as a continuous aggregate, defined in SQL.
- Compression policies → automatic. Parquet SST files are compressed on write; no per-table tuning required.
- Wire compatibility. PostgreSQL clients connect over the Postgres wire protocol, so existing `psycopg`, JDBC, or `pg` drivers work for SQL queries.
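The first two mappings can be sketched as a small helper that renders GreptimeDB-style SQL from a description of a TimescaleDB table. This is a minimal sketch under assumptions: the helper names, the column typing (tags as `STRING`, fields as `DOUBLE`), and the example table are hypothetical, and the generated statements follow the `TIME INDEX`, `WITH (ttl = ...)`, and `CREATE FLOW ... SINK TO ...` forms as best understood here. Verify them against the GreptimeDB SQL reference before running them. Identifiers are interpolated directly, so only pass trusted names.

```python
def greptime_create_table(table, time_col, tag_cols, field_cols, ttl=None):
    """Render a CREATE TABLE statement with a TIME INDEX and optional TTL."""
    cols = [f"{c} STRING" for c in tag_cols]        # assumed tag type
    cols += [f"{c} DOUBLE" for c in field_cols]     # assumed field type
    cols.append(f"{time_col} TIMESTAMP TIME INDEX")
    if tag_cols:
        cols.append(f"PRIMARY KEY ({', '.join(tag_cols)})")
    ddl = f"CREATE TABLE IF NOT EXISTS {table} (\n  " + ",\n  ".join(cols) + "\n)"
    if ttl:
        ddl += f" WITH (ttl = '{ttl}')"              # retention via TTL option
    return ddl + ";"


def greptime_create_flow(flow, source, sink, time_col, bucket, tag_col, field_col):
    """Render a Flow that averages one field per tag and time bucket."""
    return (
        f"CREATE FLOW IF NOT EXISTS {flow}\n"
        f"SINK TO {sink}\n"
        f"AS\n"
        f"SELECT {tag_col}, avg({field_col}) AS avg_{field_col}, "
        f"date_bin(INTERVAL '{bucket}', {time_col}) AS time_window\n"
        f"FROM {source}\n"
        f"GROUP BY {tag_col}, time_window;"
    )


# Hypothetical example: a cpu hypertable keyed by hostname, 30-day retention.
table_ddl = greptime_create_table("cpu", "ts", ["hostname"], ["usage_user"], ttl="30d")
flow_ddl = greptime_create_flow(
    "cpu_1m", "cpu", "cpu_1m_avg", "ts", "1 minute", "hostname", "usage_user"
)
print(table_ddl)
print(flow_ddl)
```

The generated statements can be sent over the PostgreSQL wire protocol with an existing driver, which is the fourth mapping in the list.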

The benchmark above used the GreptimeDB TSBS fork to load the same generated dataset into both databases, which is also a working example for one-time bulk migrations from TimescaleDB-shaped data.

When TimescaleDB Still Wins

This comparison is about fit for time-series and observability workloads, not an argument that TimescaleDB is bad. TimescaleDB remains the better fit for:

- Workloads dominated by point lookups and Top-N queries: the `lastpoint` and `groupby-orderby-limit` cases, where B-tree indexes matter more than columnar scan throughput.
- Deep PostgreSQL ecosystem dependencies: Foreign Data Wrappers, PostGIS, joins across operational and time-series tables in one transaction, transactional `INSERT … RETURNING` patterns.
- Existing PostgreSQL-shaped teams where retraining cost outweighs the throughput and storage gains.

If observability or high-throughput ingestion is not the primary workload, the trade-off is genuinely workload-dependent. The case for GreptimeDB as a TimescaleDB alternative is strongest when ingestion volume, storage cost, or distributed scaling is the binding constraint.

Conclusion

GreptimeDB is a direct alternative to TimescaleDB for time-series and observability: 2.17× write throughput, 1/18 the storage footprint, and up to 67× faster aggregation queries on the standard TSBS benchmark — without inheriting PostgreSQL's single-node scaling ceiling. SQL plus PromQL covers both the analytical and the dashboard sides of the workload, and object-storage-first economics change the cost curve for long-retention data.

See the full GreptimeDB vs. TimescaleDB comparison for the feature-by-feature breakdown, or deploy GreptimeDB on Kubernetes to run TSBS against your own dataset.

About Greptime

GreptimeDB is an open-source, cloud-native database purpose-built for real-time observability. Built in Rust and optimized for cloud-native environments, it provides unified storage and processing for metrics, logs, and traces — delivering sub-second insights from edge to cloud at any scale.

GreptimeDB OSS – The open-source database for small to medium-scale observability and IoT use cases, ideal for personal projects or dev/test environments.

GreptimeDB Enterprise – A robust observability database with enhanced security, high availability, and enterprise-grade support.

We're open to contributors — get started with issues labeled good first issue and connect with our community.
